Evaluation

- class Evaluator(plot_scale='linear', tick_format=ValueType.LIN)

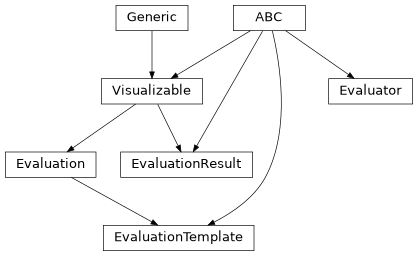

Bases: ABC

Evaluation routine for investigated object states, extracting performance indicators of interest.

Evaluators represent the process of extracting arbitrary performance indicator samples \(X_m\) in the form of Artifact instances from investigated object states.

- abstract evaluate()

Evaluate the state of an investigated object.

Implements the process of extracting an arbitrary performance indicator, represented by the returned Artifact \(X_m\).

Returns: Artifact \(X_m\) resulting from the evaluation.

- Return type:

- abstract initialize_result(grid)

Initialize the respective result object for this evaluator.

- Parameters:

grid (Sequence[GridDimensionInfo]) – The parameter grid over which the simulation iterates.

- Return type:

Returns: The initialized evaluation result.

- abstract property abbreviation: str

Short string representation of this evaluator.

Used as a label for console output and plot axes annotations.

- property plot_scale: str

Scale of the scalar evaluation plot.

Refer to the Matplotlib documentation for a list of accepted values.

Returns: The scale identifier string.
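The evaluator contract described above can be illustrated with a small, dependency-free sketch. It does not import the documented library: `SketchArtifact`, `SketchEvaluator`, and `MeanPowerEvaluator` are hypothetical stand-ins, the mean-power indicator is an assumed example, and the `tick_format` argument of the documented constructor is left out. The names in `KNOWN_SCALES` are among Matplotlib's built-in axis scales; validating against them is a choice made for this sketch only.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from typing import Sequence

# Hypothetical stand-ins; none of these names belong to the documented library.
KNOWN_SCALES = ("linear", "log", "symlog", "logit")  # some built-in Matplotlib scales


@dataclass
class SketchArtifact:
    """A single performance indicator sample X_m."""

    value: float


class SketchEvaluator(ABC):
    """Mirrors the documented interface: evaluate(), abbreviation, plot_scale."""

    def __init__(self, plot_scale: str = "linear") -> None:
        if plot_scale not in KNOWN_SCALES:
            raise ValueError(f"Unsupported plot scale: {plot_scale!r}")
        self._plot_scale = plot_scale

    @abstractmethod
    def evaluate(self) -> SketchArtifact:
        """Extract one artifact from the current state of the investigated object."""

    @property
    @abstractmethod
    def abbreviation(self) -> str:
        """Short label for console output and plot axes."""

    @property
    def plot_scale(self) -> str:
        """Scale identifier used when plotting scalar results."""
        return self._plot_scale


class MeanPowerEvaluator(SketchEvaluator):
    """Assumed example indicator: mean power of the samples held by the object."""

    def __init__(self, samples: Sequence[float], plot_scale: str = "log") -> None:
        super().__init__(plot_scale)
        self.samples = samples

    def evaluate(self) -> SketchArtifact:
        power = sum(s * s for s in self.samples) / len(self.samples)
        return SketchArtifact(power)

    @property
    def abbreviation(self) -> str:
        return "PWR"


evaluator = MeanPowerEvaluator([1.0, -1.0, 2.0, -2.0])
artifact = evaluator.evaluate()
print(evaluator.abbreviation, evaluator.plot_scale, artifact.value)
```

Because `evaluate()` and `abbreviation` are abstract in the sketch, the base class cannot be instantiated directly; only subclasses that provide both can be used.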

- class Evaluation

Bases: Generic[VT], Visualizable[VT]

Evaluation of a single simulation sample.

Evaluations are generated by Evaluators during Evaluator.evaluate().

- class EvaluationTemplate(evaluation)

Bases: Generic[ET, VT], Evaluation[VT], ABC

Template class for simple evaluations containing a single object.
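The idea of a template that wraps a single evaluated object can be sketched with a generic base class. This is only an illustration under stated assumptions: `SketchEvaluationTemplate`, its `evaluation` property, the `summary()` method, and `ErrorCountEvaluation` are hypothetical names, and the visualization type parameter `VT` of the documented class is omitted.

```python
from abc import ABC, abstractmethod
from typing import Generic, TypeVar

ET = TypeVar("ET")  # type of the single wrapped object


class SketchEvaluationTemplate(Generic[ET], ABC):
    """Hypothetical stand-in: stores one object and leaves its interpretation abstract."""

    def __init__(self, evaluation: ET) -> None:
        self._evaluation = evaluation

    @property
    def evaluation(self) -> ET:
        """The wrapped object (assumed accessor, not taken from the library)."""
        return self._evaluation

    @abstractmethod
    def summary(self) -> str:
        """Hypothetical hook that turns the wrapped object into a short text."""


class ErrorCountEvaluation(SketchEvaluationTemplate[int]):
    """Assumed example: the wrapped object is an integer error count."""

    def summary(self) -> str:
        return f"{self.evaluation} errors"


sample = ErrorCountEvaluation(3)
print(sample.summary())
```

Binding the type parameter in the subclass (`SketchEvaluationTemplate[int]`) lets a static type checker verify that the wrapped object is used consistently.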

- class EvaluationResult(grid, evaluator=None, base_dimension_index=0)

Bases: Generic[AT], Visualizable[PlotVisualization], ABC

Result of an evaluation routine iterating over a parameter grid.

Evaluation results are generated by Evaluator instances as a final step within the evaluation routine.

- Parameters:

grid (Sequence[GridDimensionInfo]) – Parameter grid over which the simulation generating this result iterated.

evaluator (Evaluator | None) – Evaluator that generated this result. If not specified, the result is considered to be generated by an unknown evaluator.

- abstract add_artifact(coordinates, artifact, compute_confidence=True)

Add an artifact to this evaluation result.

- abstract runtime_estimates()

Extract a runtime estimate for this evaluation result.

Returns: A numpy array containing the runtime estimates for each grid section. If no estimates are available, None is returned.

- abstract to_array()

Convert the evaluation result raw data to an array representation.

Used to store the results in arbitrary binary file formats after simulation execution.

Returns: The array result representation.

- Return type:

- to_str(grid_coordinates)

Convert the evaluation result at the specified grid coordinates to a string representation.

- Parameters:

grid_coordinates (Sequence[int]) – Coordinates of the grid section to be converted.

- Return type:

Returns: The string representation of the evaluation result.

- property grid: Sequence[GridDimensionInfo]

Parameter grid over which the simulation iterated.
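How a result object might collect artifacts per grid section can be sketched as follows. The class `SketchResult` is a hypothetical stand-in, not the library's implementation: the grid is reduced to a tuple of dimension sizes instead of `GridDimensionInfo` objects, artifacts are plain floats, the `compute_confidence` argument is omitted, the `mean()` helper is invented for the illustration, and nested lists replace NumPy arrays to keep the example dependency-free.

```python
from itertools import product
from typing import Dict, List, Optional, Sequence, Tuple


class SketchResult:
    """Hypothetical stand-in collecting scalar artifacts for each grid section."""

    def __init__(self, grid_shape: Sequence[int]) -> None:
        self.grid_shape = tuple(grid_shape)
        # One empty artifact list for every coordinate tuple of the grid
        self._artifacts: Dict[Tuple[int, ...], List[float]] = {
            coords: [] for coords in product(*(range(n) for n in self.grid_shape))
        }

    def add_artifact(self, coordinates: Sequence[int], artifact: float) -> None:
        """Store one artifact at the given grid coordinates."""
        self._artifacts[tuple(coordinates)].append(artifact)

    def mean(self, coordinates: Sequence[int]) -> Optional[float]:
        """Average of the artifacts in one grid section, or None if it is empty."""
        samples = self._artifacts[tuple(coordinates)]
        return sum(samples) / len(samples) if samples else None

    def to_array(self) -> list:
        """Nested lists of per-section means, shaped like the grid."""

        def build(prefix: Tuple[int, ...], dims: Tuple[int, ...]):
            if not dims:
                return self.mean(prefix)
            return [build(prefix + (i,), dims[1:]) for i in range(dims[0])]

        return build((), self.grid_shape)

    def to_str(self, grid_coordinates: Sequence[int]) -> str:
        """Short text for one grid section, e.g. for console output."""
        mean = self.mean(grid_coordinates)
        return "n/a" if mean is None else f"{mean:.3g}"

    def runtime_estimates(self) -> None:
        """This sketch records no timing data, so no estimate is available."""
        return None


result = SketchResult([2, 3])
result.add_artifact((0, 1), 0.5)
result.add_artifact((0, 1), 1.5)
result.add_artifact((1, 2), 4.0)
print(result.to_str((0, 1)), result.to_array())
```

Keying the storage by coordinate tuples keeps `add_artifact` independent of the number of grid dimensions; only `to_array` needs to know the grid shape.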